By Mathieu Boudreau

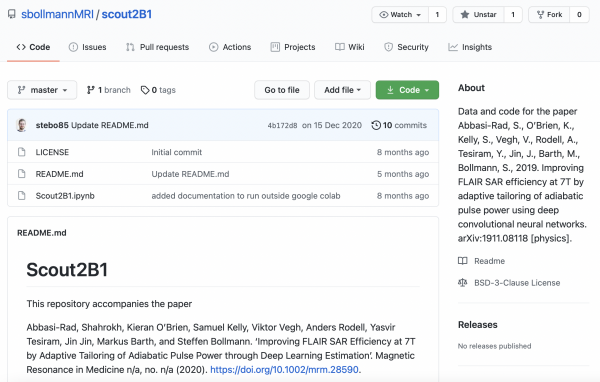

The April 2021 MRM Highlights Reproducible Research Insights interview is with Shahrokh Abbasi-Rad, Markus Barth and Steffen Bollmann, researchers at The University of Queensland, Brisbane, Australia. Their paper is entitled “Improving FLAIR SAR efficiency at 7T by adaptive tailoring of adiabatic pulse power through deep learning B1+ estimation”, and it was chosen as this month’s Reproducible Research pick because they made their training dataset and code available for the benefit of the MR community, and also shared a Jupyter Notebook example on Google Colab. To learn more about this team and their research, check out our recent interview with them.

General questions

1. Why did you choose to share your code/data?

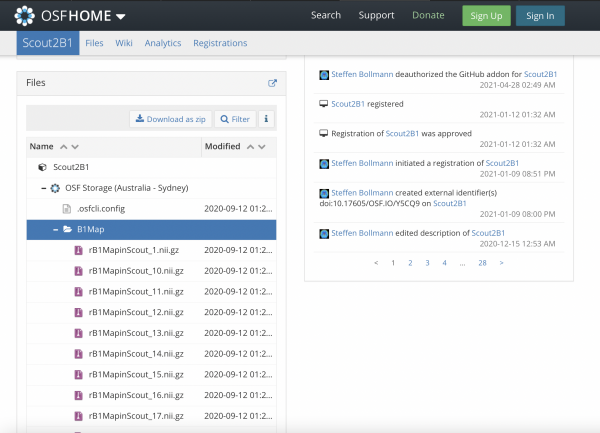

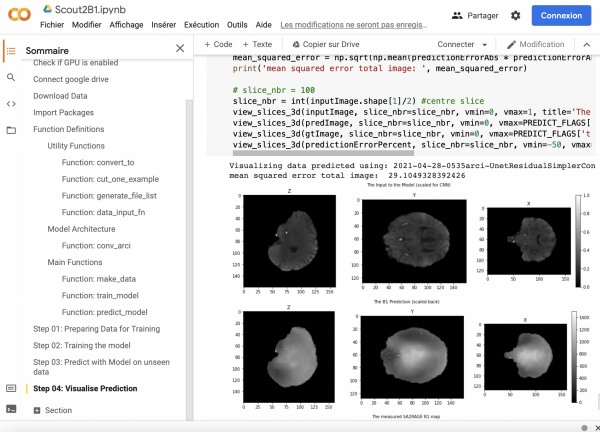

In this particular project, we shared our deep learning implementation with readers in the hope it might inspire other researchers to take our idea further, improve the current implementation, and find new applications. One of our reviewers was also very curious, and even quite skeptical, about the exact deep learning implementation. We therefore decided, during the revision process, that it would be a good idea to share the code and data in a readily accessible way so that anyone can test the model we trained and used in the manuscript, and also train new models with their own data. The model we trained for this manuscript is most likely specific to our head coil, and we are keen to see if others are able to acquire their own data based on our example and train better models. To avoid any difficulties with setting up TensorFlow and with the need for a graphics processing unit (GPU), we provided the code in a Google Colab notebook, while the data are stored on the Open Science Framework (OSF) platform, which allows programmatic access to the data in the notebook.

2. What is your lab or institutional policy on code/data sharing?

The University of Queensland (UQ) doesn’t just encourage code and data sharing, it actively supports this process on all levels: UQ has built up a unique management system (https://research.uq.edu.au/rmbt/uqrdm) for all our research data, which provides collaborative storage for all research projects and makes data sharing possible from the start of the project. Internally, in our lab, we use GitLab to version control code for our projects, so people in our group are already used to managing their source code in git. From there, the additional step to publishing code openly is small. We encourage everyone to publish their code openly, but it’s not mandatory, and is sometimes not even possible (e.g., when working with code under vendor agreements or when working with patient data). Although we try to share as much as we can, it is impossible to share everything as it takes such a long time to curate the data (e.g., de-identify the human data, documentation/metadata) and clean up the code to make it useful for others. However, we have been doing this for a few years now, and have come to appreciate a further benefit of sharing curated data and code with a publication: by doing so, we make it much easier for ourselves to use our own previous work. To illustrate this point, I can tell you that the worst collaborator I have ever had was myself 5 years ago! I was asked to reanalyze data from a publication for a review paper and it took me ages to get all the R-scripts running again; even though I thought I had documented everything well, it wasn’t at all easy. That experience definitely changed my mind about code and data sharing.

3. At what stage did you decide to share your code/data? Is there anything you wish you had known or done sooner?

We decided to share the deep learning implementation during the revision stage of the paper because, as mentioned before, one reviewer was very skeptical about the deep learning part. We also looked at sharing the rest of the code, but since it’s a mix of MATLAB and vendor-specific code that was tightly integrated with other projects and external dependencies that we are not allowed to publish, sharing it would have been very time consuming and entailed a lot of re-implementation. We took preparing the deep learning code for this publication as an opportunity to refactor this part quite heavily, and we went through the code again in a pair-programming style. Interestingly, we found multiple details that we hadn’t described correctly in the manuscript, or points where we had specified variables that were no longer used or had subsequently been overwritten. These bugs didn’t change the results, but it was scary to see how easy it is to miss things in the code that could potentially lead to rejection of the paper. One lesson we learned from this, and an aspect we are focusing on right now, is the importance of integrating refactoring earlier in the process and using linters to improve code quality; we are also integrating unit testing into our workflows.

4. How do you think we might encourage researchers in the MRI community to contribute more open-source code along with their research papers?

Highlighting reproducible research is already helping a lot! Unfortunately, there are still many people in recruiting and promotion panels who do not see the value of spending time on this, but adding these MRM Highlights to our CVs will help tremendously to show the importance of it. I am not a big fan of making open-source code and data sharing mandatory, as to do so would create problems for researchers working with vendor-specific code and patient data. At the same time, I believe that just dumping code on GitHub and adding a link to it in the paper is not that useful. I think there are multiple reasons why people don’t share their code, and maybe helping to address these issues might encourage more code sharing. Research code, for example, is very often just a proof of concept and likely contains many bugs, so sharing it would require a lot of extra work. If this work is not appreciated by reviewers and the MRI community (or by promotion panels!), then we can hardly expect everyone to be prepared to do it. Maybe reviewers should ask for code more often, where it’s reasonable to do so. Another problem is that some people are not familiar with version control systems, and sharing code can therefore require a few extra steps. Also, as we saw in this project, de-identifying data is not that straightforward, for example when using non-standard sequences; indeed, we had to skull-strip the data to make it shareable, because de-identifying algorithms failed. Maybe ISMRM could add a teaching session on reproducible research practices covering version control, data anonymization, data publication and unit testing? We had a great secret session on this topic in Montreal, with a fully packed room, but maybe these concepts should become less of a secret?

Questions about the specific reproducible research habit

1. What advice do you have for people who would like to use Jupyter Notebooks and Google Colab?

Google Colab is a free service with a paid optional component. It’s a full Jupyter environment with all deep learning packages pre-installed and configured, and it provides a GPU free of charge for a few hours (longer in the version where you pay for it). However, there are a few issues with it that we had to work around, and this information might be useful for others as well. The first problem to consider is the fact that this service might not be available forever, as Google has been known to discontinue projects suddenly. We therefore made sure we kept everything in the notebook independent of Google Colab, so it could run on any Jupyter server. We were also careful not to use the direct link to Google Colab in our publication, but instead used the OSF platform to store the link to the notebook. This will give us the option of changing the link later should Google Colab cease to exist. The OSF has a preservation fund and also provides digital object identifiers, so we can be pretty certain this service will be around for the coming decades. The next problem is that Google Colab cannot store data, so we had to come up with a way of reliably downloading the data into the notebook. Again, the OSF came to our rescue: it provides free storage and also integrates with institutional storage providers. There is a great open-source project that enables command line access to data on the OSF platform and we used this to download the data into the Google Colab notebook. If anyone wants to know about this in more detail, I wrote a short blog post about it last year: https://mri.sbollmann.net/index.php/2020/05/27/google-colab-osf.
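For readers who want to try the same pattern, here is a minimal sketch of pulling OSF-hosted data into a notebook with the open-source `osfclient` command line tool. It is not the code from the paper's notebook: the project ID `abc12`, the folder name `osf_data` and both helper functions are hypothetical placeholders, and the sketch assumes a public OSF project and that `osfclient` has been installed (e.g., with `pip install osfclient`). Nothing in it depends on Google Colab, so it should behave the same on any Jupyter server.

```python
from __future__ import annotations

import shutil
import subprocess
from pathlib import Path

# Hypothetical placeholder: replace with the ID from your own OSF project URL.
OSF_PROJECT_ID = "abc12"


def build_clone_command(project_id: str, target_dir: str) -> list[str]:
    """Return the osfclient command that copies a project's files locally."""
    return ["osf", "-p", project_id, "clone", target_dir]


def fetch_osf_data(project_id: str, target_dir: str = "osf_data") -> Path:
    """Download the project's files unless the target folder already has content."""
    target = Path(target_dir)
    if target.exists() and any(target.iterdir()):
        # Re-running the notebook should not trigger a second download.
        return target
    if shutil.which("osf") is None:
        raise RuntimeError("osfclient not found; try `pip install osfclient`")
    subprocess.run(build_clone_command(project_id, target_dir), check=True)
    return target
```

In a notebook cell, `data_dir = fetch_osf_data(OSF_PROJECT_ID)` would then be the only line that touches the network. Keeping the download behind a single function makes it easy to swap in a different storage location later.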

2. Did you encounter any challenges or hurdles during the process of developing your notebook examples for GPU computing?

Yes. We initially developed our model on a Tesla K40 GPU and optimized it on V100 GPUs (kindly provided by Oracle Cloud), but the free GPUs provided by Google sometimes have less memory. We therefore had to tweak our model to train on the free Google Colab GPUs. Another problem we had to solve while developing this code was that we ran out of free GPU time and were blocked by Google. We found a good workaround, which might also be of interest to people who want to process local data using Google Colab without uploading them to the cloud (e.g., due to privacy concerns): Google Colab can connect to a Jupyter server that is running locally. Exploiting this feature, we were able to connect Google Colab to our local GPU and continue developing without any issues.

3. What questions did you ask yourselves when preparing your code and data for sharing?

We looked at all our code and data in order to try and decide what might be useful for others. We were unable to share all the code and data as this would have taken up too much time during the revision stage; also, we can’t share the scanner-specific implementation. We wanted to be sure that what we shared can be used directly by others without too much hassle. These are the considerations that led us to share the deep learning parts of the paper via Google Colab.

4. Are there any other reproducible research habits that you didn’t use for this paper but might be interested in trying in the future?

We didn’t do any automatic testing of code in this project, but definitely saw the need for this when we came across multiple bugs during the refactoring. We’re now integrating automatic testing into new projects from the start. We also use containers a lot to keep our workflows portable between different computers, although we didn’t in this project as we opted for the Colab solution. One of our current projects is called NeuroDesk and this bundles our efforts in this space: we automatically build and test various neuroimaging containers (e.g., FSL, FreeSurfer, AFNI, BART, LCModel, MINC) and develop a reproducible neuroimaging analysis platform. Finally, we used some MATLAB code in this project, but we are aiming to move towards open and high-performance languages (like Julia) to make our future projects even more reproducible and shareable with the community.