By Tommy Boshkovski

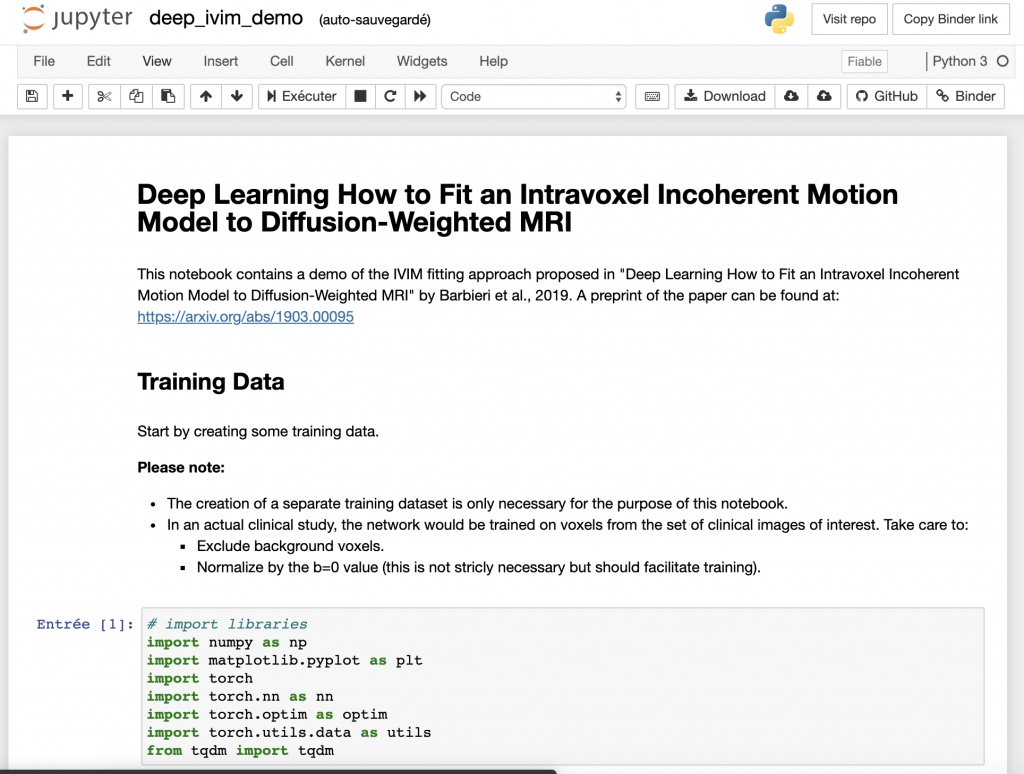

This month’s MRM Highlights Pick interview is with Sebastiano Barbieri, Oliver Gurney-Champion, and Harriet Thoeny, researchers at UNSW Sydney in Australia, Amsterdam UMC in The Netherlands, and Hôpital Cantonal Fribourgeois, University of Fribourg in Switzerland. In a recent paper, they presented a deep learning approach for fitting an intravoxel incoherent motion (IVIM) model to diffusion-weighted MRI. They showed that their method is more efficient than the alternatives and produces smoother parameter maps. This paper was chosen as this month’s Reproducible Research pick because, in addition to sharing their code, the authors shared a nice demo in a Jupyter notebook (publication of the image data is currently in progress).

MRMH: Can you tell us a bit about yourselves and your backgrounds?

Sebastiano: I work on machine learning applications in the healthcare domain at the Centre for Big Data Research in Health at UNSW Sydney, and my background is in mathematics and computer science. I received a PhD from Jacobs University in Germany and the Fraunhofer MEVIS Institute for Digital Medicine. More recently, I completed a biostatistics degree at Macquarie University in Sydney.

Oliver: I’m currently an assistant professor at the Amsterdam University Medical Centers, affiliated with the University of Amsterdam, where I work on the development and implementation of quantitative MRI. However, most of the work for the paper was done during my time as a postdoc at The Institute of Cancer Research in London. Sebastiano and I got to know each other after I read his paper on Bayesian IVIM fitting and decided to contact him by email. Since then we have collaborated on multiple projects.

Harriet: I am a full professor of radiology at the University of Fribourg in Switzerland. I have many years of experience in quantitative MR imaging with diffusion-weighted MRI as my main focus. Sebastiano and I worked together for several years at the University of Bern. I think we made an excellent team because Sebastiano was always there to answer my difficult clinical questions.

MRMH: Can you give us a brief overview of your paper?

Sebastiano: The paper is about fitting IVIM parameters to diffusion-weighted MRI. To some extent, this is a mathematically ill-posed problem: a small variation in the input can have a large effect on the computed output. Many of the traditional methods used to compute IVIM parameters have been found to be insufficiently accurate, which has hampered the application of these methods in clinical practice. For this reason, we proposed a novel approach for IVIM parameter fitting based on deep learning. We found that this method led to competitive results in terms of accuracy and visually improved parametric maps on patient data.

Harriet: IVIM is a very useful method because it provides information on diffusion and perfusion simultaneously. In radiology, it would be very beneficial to have a method for obtaining information without administering any contrast agent. There are various clinical applications where it would be extremely helpful to use this method because it provides reproducible results; diffuse kidney disease is an example.
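
For readers unfamiliar with the model, the diffusion and perfusion contributions mentioned above are usually written as a bi-exponential signal decay. The form below is the standard textbook expression; the symbols are conventional notation and are not taken from the interview itself. Here $S(b)$ is the signal at b-value $b$, $S_0$ the signal without diffusion weighting, $D$ the tissue diffusion coefficient, $D^*$ the pseudo-diffusion coefficient associated with perfusion, and $f$ the perfusion fraction.

$$
\frac{S(b)}{S_0} = f \, e^{-b D^*} + (1 - f) \, e^{-b D}
$$

The three parameters $D$, $D^*$ and $f$ are the quantities that any IVIM fitting method has to estimate for each voxel.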

MRMH: What type of deep learning architecture did you use in your study?

Sebastiano: We used a feedforward backpropagation multilayer perceptron, which has been around since the 80s. It consists of an input layer, which takes the diffusion-weighted signal measured at different b-values, a series of hidden layers, and an output layer, which contains the estimated IVIM parameters. However, since this network actually forces the input signal to be represented in a more compact manner using the three IVIM parameters, it can also be seen as the encoder part of an autoencoder architecture.

Oliver: It’s a kind of autoencoder network, but in order to force the compressed signal in the bottleneck of the network to represent the IVIM parameters, we have replaced the decoder with the IVIM model. With this, the network is forced to learn which IVIM parameters best fit the presented signal. The nice thing about autoencoders is that they can be trained without ground truth data.
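
The idea of replacing the decoder with the IVIM model can be illustrated with a small sketch. It is not the authors' code and it leaves out the network entirely. It only shows the part that makes training without ground truth possible: whatever parameters the encoder outputs are fed through the bi-exponential IVIM equation, and the loss is the mismatch between the regenerated and the measured signal. The function names, b-values and parameter values are hypothetical and chosen for illustration.

```python
import math


def ivim_signal(b, d, f, d_star, s0=1.0):
    """Standard bi-exponential IVIM signal at b-value b (in s/mm^2)."""
    return s0 * (f * math.exp(-b * d_star) + (1.0 - f) * math.exp(-b * d))


def reconstruction_loss(measured, b_values, d, f, d_star):
    """Mean squared error between the measured signal and the signal
    regenerated from the estimated parameters (the fixed 'decoder')."""
    predicted = [ivim_signal(b, d, f, d_star) for b in b_values]
    return sum((m - p) ** 2 for m, p in zip(measured, predicted)) / len(measured)


# Hypothetical acquisition and tissue parameters, for illustration only.
b_values = [0, 10, 20, 50, 100, 200, 400, 800]
true_params = {"d": 0.001, "f": 0.2, "d_star": 0.05}
measured = [ivim_signal(b, **true_params) for b in b_values]

# The loss needs only the measured signal, never the true parameters.
loss_at_truth = reconstruction_loss(measured, b_values, **true_params)
loss_off = reconstruction_loss(measured, b_values, d=0.002, f=0.4, d_star=0.02)
print(loss_at_truth, loss_off)
```

In a full implementation, the three parameters would come from the output layer of the network, and the gradient of this loss would be propagated back through the IVIM equation into the network weights.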

MRMH: You note that the parameter maps generated by the model are surprisingly smooth in homogeneous tissue. How might you examine this further?

Oliver: This smoothness is interesting because the network does not know which voxels are neighbours in the image. It takes each voxel as a single independent input and predicts the IVIM parameters. It turns out that the parameters from the same tissue actually get similar values. Although it sounds like this should be the case, conventional IVIM fitting often gives very noisy maps with a lot of speckle. Our approach mitigates that while otherwise yielding very similar parameter values. In the future, we would like to investigate whether the predicted IVIM parameter values are unique or whether there is a correlation between them. Additionally, we would like to look into a test-retest analysis and compare this to the effects of treatment response.

Harriet: As a radiologist, I think IVIM maps are more visually appealing. For day-to-day radiology tasks, the use of quantitative data is not always necessary. Instead, the information they provide can be used qualitatively. By simply examining parameter maps, it is possible to make inferences that are very useful clinically. For example, in the follow-up of a tumor, you can evaluate a treatment simply by looking to see whether it has produced a difference in the perfusion and diffusion map.

MRMH: You offer suggestions on how this method for IVIM fitting might be incorporated into clinical software despite the need for repeated training on different acquisition protocols. Do you foresee any other issues that would need to be resolved before its clinical use would be a viable option?

Sebastiano: One of the challenges with deep learning methods in general is that, depending on the initial random initialization of the network, you’ll often get different models. This is because the optimization algorithm will get trapped in some local minimum of the cost function instead of finding the global minimum. This generally isn’t an issue, since these minima appear to be mostly equivalent. However, sometimes training may go wrong, and therefore I believe that there is a need for additional quality checks after training. I don’t know whether this could be taken care of in an automated manner or whether radiologists would need to do it.

Oliver: This was a feasibility study where we have shown that this method worked well in the tested volunteers. I imagine such methods need thorough testing before widespread clinical use. That being said, if we compare our method to the alternatives (e.g., least squares or Bayesian approaches), our parameter maps look promising. What is also promising about our approach is that it is really fast. We could make it even faster if we accelerated it on a GPU, which is straightforward for deep learning. Also, you could pre-train the model on simulated data and have some basic start that guarantees you’re close to some optimum instead of training with a random initialization. This might also further improve its generalizability. I think all of these approaches could help to get the method to the clinic faster. However, this was a first feasibility study. That’s how I feel, at least, or maybe Sebastiano had big ideas for commercializing it?

Sebastiano: [laughs] I’m not so interested in commercialization. I, too, see this as an initial feasibility study. The method has to be evaluated prospectively in a larger cohort before we can actually start thinking about releasing it for daily clinical use.
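
Oliver's suggestion of pre-training on simulated data could start from something like the sketch below, which draws random IVIM parameters, computes the corresponding bi-exponential signals and adds noise. Everything specific here is an assumption made for illustration: the function name, the parameter ranges, the b-values, and the use of Gaussian noise (a simplification, since noise in magnitude MR images is not strictly Gaussian). None of it is taken from the paper.

```python
import math
import random


def simulate_ivim_voxels(n_voxels, b_values, noise_sd=0.02, seed=0):
    """Return (signals, params) for n_voxels synthetic voxels.

    signals[i] is a list of noisy normalized signals, one per b-value;
    params[i] is the (d, f, d_star) tuple used to generate it.
    """
    rng = random.Random(seed)  # seeded for reproducibility
    signals, params = [], []
    for _ in range(n_voxels):
        # Assumed, illustrative parameter ranges (d and d_star in mm^2/s).
        d = rng.uniform(0.0005, 0.003)
        f = rng.uniform(0.0, 0.5)
        d_star = rng.uniform(0.01, 0.1)
        clean = [
            f * math.exp(-b * d_star) + (1.0 - f) * math.exp(-b * d)
            for b in b_values
        ]
        # Additive Gaussian noise as a simplifying assumption.
        noisy = [s + rng.gauss(0.0, noise_sd) for s in clean]
        signals.append(noisy)
        params.append((d, f, d_star))
    return signals, params


b_values = [0, 10, 20, 50, 100, 200, 400, 800]  # hypothetical protocol
signals, params = simulate_ivim_voxels(1000, b_values)
print(len(signals), len(signals[0]))
```

A network trained first on such synthetic voxels would start its training on patient data from weights that already produce plausible parameters, rather than from a random initialization.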