By Mathieu Boudreau

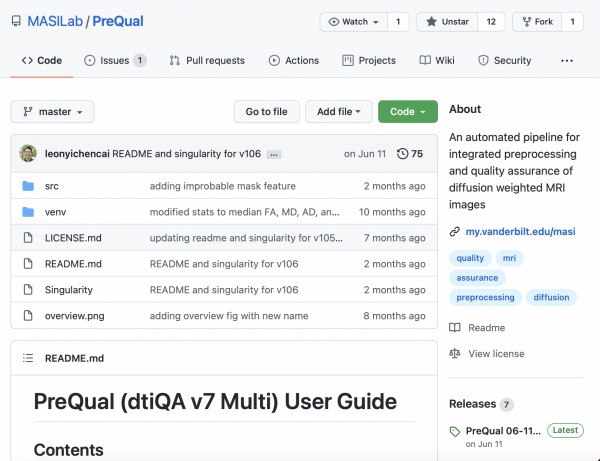

The July 2021 MRM Highlights Reproducible Research Insights interview is with Leon Y. Cai, Kurt G. Schilling, and Bennett A. Landman, researchers at Vanderbilt University in Nashville. Their paper is entitled “PreQual: An automated pipeline for integrated preprocessing and quality assurance of diffusion weighted MRI images”. It was chosen not only because the authors share their pipeline code with their paper, but also because they integrated into their project emerging tools, such as Singularity and BIDS, that may be of interest to the MRM community. To learn more about this team and their research, check out our recent interview with them.

To discuss this Q&A, please visit our Discourse forum.

General questions

1. Why did you choose to share your code?

We believe that, to help push the field toward more accurate and reproducible preprocessing, we need to be as open as possible about our implementation. The best way to know what is happening to one’s data is to be able to interrogate the software applied to it, and being able to reproduce the results is the best way to establish that a contribution to science is “real”. To address both aspects, we believe that releasing code and providing thorough documentation, the two things we sought to do with our release, are extremely important steps, both for us and for our colleagues. Additionally, by giving the field open access to our code, we hope that PreQual can become a collaborative effort as additional preprocessing and QA tools are released.

2. What is your lab or institutional policy on code/data sharing?

In our lab, whenever we release code for public use (e.g., a new pipeline), we generally open-source as much as possible and provide it in a container. For data, we collaborate as much as possible while always adhering to HIPAA to protect patients (e.g., sharing data may require a material transfer agreement, or MTA). In this way, we hope to lower the barriers to using medical image processing in clinical and basic science studies, and to enable our collaborators and other domain experts to efficiently develop, evaluate, and deploy our methods for diverse anatomical and clinical scenarios using big imaging data.

3. What did you ask yourselves when preparing your software to be shared as open source?

The main question we asked was: how can we make this code as reproducible and as easy to run as possible? We concluded that the answer was to provide the source code and make it available in a Singularity container.

4. How do you think we might encourage researchers in the MRI community to contribute more open-source code along with their research papers?

Providing open-source code needs to become the standard for research within the next 5 to 10 years in order to promote reproducible science. In this vein, providing containerized code, especially for newly published pipelines, may serve as a good starting point. Wide adoption would let scientists run published code and “hit the ground running” when attempting to reproduce other authors’ work.

Questions about the specific reproducible research habit

1. What advice do you have for people who would like to get started with Singularity in their own projects?

When making your first container, there’s no need to make it “pretty”. One powerful feature of Singularity is that a container is essentially a computer. You can shell into it just as you would into any other machine, and you can even install a VNC server inside it to view a GUI desktop. In essence, if you treat it as a computer on which you are installing libraries, dependencies, and your own code, then building it is just like setting up any other computer. Once you’ve done that and learned the ins and outs of working with containers, making it “pretty”, for example with a recipe or definition file, becomes much simpler.

2. Why was it important for you to make your pipeline compatible with datasets that are in the BIDS format?

BIDS is quickly becoming the standard in the field for organizing neuroimaging data, and we wanted to best support our colleagues who work with data in this format.
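As an illustration of what BIDS compatibility involves on the input side, here is a minimal Python sketch (not taken from PreQual) of how a tool might locate diffusion runs in a BIDS dataset. It assumes the common layout `sub-<label>/[ses-<label>/]dwi/`, gzipped NIfTI images named `*_dwi.nii.gz`, and `.bval`/`.bvec` files stored next to each image. It deliberately ignores uncompressed `.nii` files and the BIDS inheritance principle, under which sidecar files may live at a higher level of the dataset. The function name `find_bids_dwi` is hypothetical.

```python
from pathlib import Path
from typing import Dict, List


def find_bids_dwi(bids_root: str) -> List[Dict[str, Path]]:
    """Hypothetical helper: list DWI runs with their .bval/.bvec files.

    Assumes sidecars sit next to each *_dwi.nii.gz image (no inheritance).
    """
    root = Path(bids_root)
    runs: List[Dict[str, Path]] = []
    # Diffusion data live under sub-<label>/dwi/ or sub-<label>/ses-<label>/dwi/
    patterns = ["sub-*/dwi/*_dwi.nii.gz", "sub-*/ses-*/dwi/*_dwi.nii.gz"]
    for pattern in patterns:
        for nifti in sorted(root.glob(pattern)):
            # Strip the double extension to get e.g. "sub-01_ses-1_dwi"
            stem = nifti.name[: -len(".nii.gz")]
            bval = nifti.with_name(stem + ".bval")
            bvec = nifti.with_name(stem + ".bvec")
            # Keep only runs whose gradient files are present alongside them
            if bval.exists() and bvec.exists():
                runs.append({"dwi": nifti, "bval": bval, "bvec": bvec})
    return runs
```

A run whose `.bval` or `.bvec` file is missing from its `dwi/` folder is skipped rather than raising an error; a real tool would more likely report it to the user.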

3. What considerations went into ensuring that this software can be used, maintained and/or improved in the long term (e.g., on the user or developer side)?

On the user side, we opted for containerization not just for ease of installation and running, but also because the container acts like a “snapshot” in time. It freezes all software dependencies, meaning that over the next 5 to 10 years, updates to libraries and other software should not significantly affect users’ ability to run the code.

On the developer side, the code was written to be as modular as possible, with each step in the pipeline contained in its own function, so that data are simply passed from one function to the next. We hope that this will make it easier in the future, both for us and for our colleagues, to add or remove modules as the field of DWI preprocessing develops.
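The modular design described above can be illustrated with a minimal Python sketch. It is not PreQual’s code: `DWIData`, `denoise`, `correct_motion`, `quality_report`, and `run_pipeline` are hypothetical names, and each step is a placeholder that only records that it ran. The point is the structure: every step is a function that takes the previous step’s output and returns its own, so adding or removing a module amounts to editing a list.

```python
from dataclasses import dataclass, field, replace
from typing import Callable, List


@dataclass(frozen=True)
class DWIData:
    """Hypothetical bundle of data handed from one pipeline step to the next."""
    volumes: List[float]                            # stand-in for image data
    log: List[str] = field(default_factory=list)   # names of steps already run


# A step is any function that maps pipeline data to new pipeline data.
Step = Callable[[DWIData], DWIData]


def denoise(data: DWIData) -> DWIData:
    # Placeholder: a real step would call a denoising tool here.
    return replace(data, log=data.log + ["denoise"])


def correct_motion(data: DWIData) -> DWIData:
    # Placeholder: a real step would estimate and correct motion here.
    return replace(data, log=data.log + ["correct_motion"])


def quality_report(data: DWIData) -> DWIData:
    # Placeholder: a real step would generate QA figures here.
    return replace(data, log=data.log + ["quality_report"])


def run_pipeline(data: DWIData, steps: List[Step]) -> DWIData:
    """Apply each step in order, feeding each one the previous output."""
    for step in steps:
        data = step(data)
    return data
```

For example, `run_pipeline(DWIData(volumes=[1.0, 2.0]), [denoise, quality_report])` skips motion correction without touching any other step, and the returned `log` is `['denoise', 'quality_report']`. Because each step returns a new object instead of modifying its input, the original data stay available for comparison or quality assurance.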

4. Are there any other reproducible research habits that you didn’t use for this paper but might be interested in trying in the future?

For this paper, we shared all the code, which made a lot of sense to us because it was a methods-driven paper. In the future, we think it would also be best to de-identify and share data as much as possible, within the constraints of protecting patient information. This should make science more reproducible and improve the quality of work. We are taking on this challenge with our MASiVar dataset, which has already been characterized and accepted for publication in MRM.