By Mathieu Boudreau

This MRM Reproducible Research Insights interview is with Yaël Balbastre and Martina F. Callaghan, researchers at the Wellcome Centre for Human Neuroimaging at UCL in London. Their paper is entitled “Correcting inter-scan motion artifacts in quantitative R1 mapping at 7T”. Their article was chosen as this month’s Highlights pick because it demonstrated exemplary reproducible research practices; in particular, the authors shared their code, integrating it into an open-source toolbox (hMRI), and also shared a script that reproduces all their simulation figures. To learn more, check out our recent MRM Highlights Q&A interview with them.

General questions

1. Why did you choose to share your code/data?

To help others to use the methods we were reporting and to recreate our results as simply as possible.

2. What is your lab or institutional policy on sharing research code and data?

We are hugely committed to open science. The Statistical Parametric Mapping (SPM) neuroimaging analysis package created and developed in our department (UCL’s Imaging Neuroscience Department / The FIL / The Wellcome Centre for Human Neuroimaging) has been available to the community as open source under a GNU General Public License since the nineties. Following in this tradition, we always seek to make other code we create as open and accessible as possible, and that includes the hMRI toolbox, which is a plugin for SPM.

While we do also share data, this can be trickier because of the need to respect anonymity/data protection regulations (mostly structural images), and/or because of the complexity of paradigms (functional images).

3. At what stage did you decide to share your code/data? Is there anything you wish you had known or done sooner?

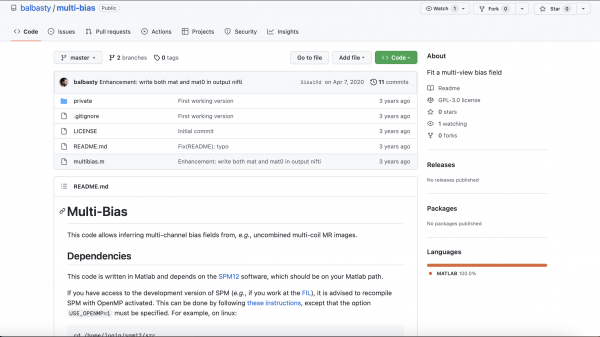

The code for the main methodology was developed on GitHub in a public repository. In fact, this repository was public before the paper was even written. The sharing of the simulation code was motivated by the valuable revision process at MRM, and this code was made available in a separate GitHub repository that we use for isolated code snippets/simulations associated with our papers.

4. How do you think we might encourage researchers in the MRI community to contribute more open-source content along with their research papers?

Initiatives like this, highlighting the practice, will hopefully make people think more about sharing, as might explicitly requesting sharing as part of the submission process. It may be that the more people benefit from open-source content, the more they will consider sharing. Ensuring that code is recognized as an important scientific output, including from the perspective of career advancement, is also very important, and might help us to move away from the publication-driven mentality.

Questions about the specific reproducible research habit

1. What advice do you have for people who would like to contribute to established software projects like the hMRI toolbox?

We would be very happy for people to contribute. We are a small team, but we hold regular monthly meetings where we try to make the toolbox more modular and easier to contribute to, by incorporating unit tests and providing templates for new users. A publicly available data set also allows people to check that they have not broken functionality and allows us to perform integration testing. We review pull requests at our monthly meetings, so you may sometimes have to wait a little for a response. Please be patient with us!

However, thanks to funding from the Max Planck Society, we are now recruiting someone to work specifically on the toolbox, which will help to accelerate our efforts considerably. If you are an experienced MATLAB software developer and would be interested in joining us, please see our advert here.

2. What considerations went into ensuring that the code and data you shared can be used, maintained and/or improved in the long term (on the user and/or the developer side)?

For the hMRI toolbox, we have a mailing list to provide support to users. Developers can contribute via GitHub. Anyone is welcome to raise issues — letting us know about problems they encounter or enhancements they would like to see implemented.

3. What practical advice do you have for people who would like to write code that creates reproducible figures to be shared along with their paper (as you did with your simulations)?

Make sure that someone else tests the code so you know it works on different systems. This will also tell you whether you have inadvertently included any hard links, local dependencies or confusing documentation.
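As an editorial illustration of this advice (not taken from the authors' repositories, which are MATLAB-based), here is a minimal Python sketch of two habits that tend to make a figure script portable: resolving file locations relative to the script rather than to a machine-specific path, and seeding any random simulation so that a second person gets the same numbers. The names `output_path` and `simulate`, and the `figures` folder, are hypothetical.

```python
from pathlib import Path
import random

# Resolve locations relative to this script (or to the working directory when
# run interactively) instead of a machine-specific absolute path.
try:
    BASE_DIR = Path(__file__).resolve().parent
except NameError:
    BASE_DIR = Path.cwd()


def output_path(name, base_dir=BASE_DIR):
    """Return the path of a figure file inside a 'figures' folder under base_dir.

    The folder is not created here; the caller is expected to create it
    (for example with path.parent.mkdir(parents=True, exist_ok=True)) before saving.
    """
    return Path(base_dir) / "figures" / name


def simulate(n_samples, seed=0):
    """Hypothetical stand-in for a simulation.

    A dedicated, seeded generator makes every run return the same values,
    independently of any other random calls in the session.
    """
    rng = random.Random(seed)
    return [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
```

A colleague running the script on another machine should then obtain the same values from `simulate` and find the output under the script's own folder, which is the kind of check the answer above recommends.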

4. Are there any other reproducible research habits that you didn’t use for this paper but might be interested in trying in the future?

In my team, we are not formally trained in software engineering, and so effectively we are “learning on the job”. We benefit greatly from being part of the hMRI toolbox development consortium and from large-scale open-source initiatives like Gadgetron. We learn a lot by collaborating with people who really do know about software development, and we try to adopt good habits all the time: unit testing, code repositories, Docker containers, etc. We will try to keep on learning and improving our practices!