By Mathieu Boudreau

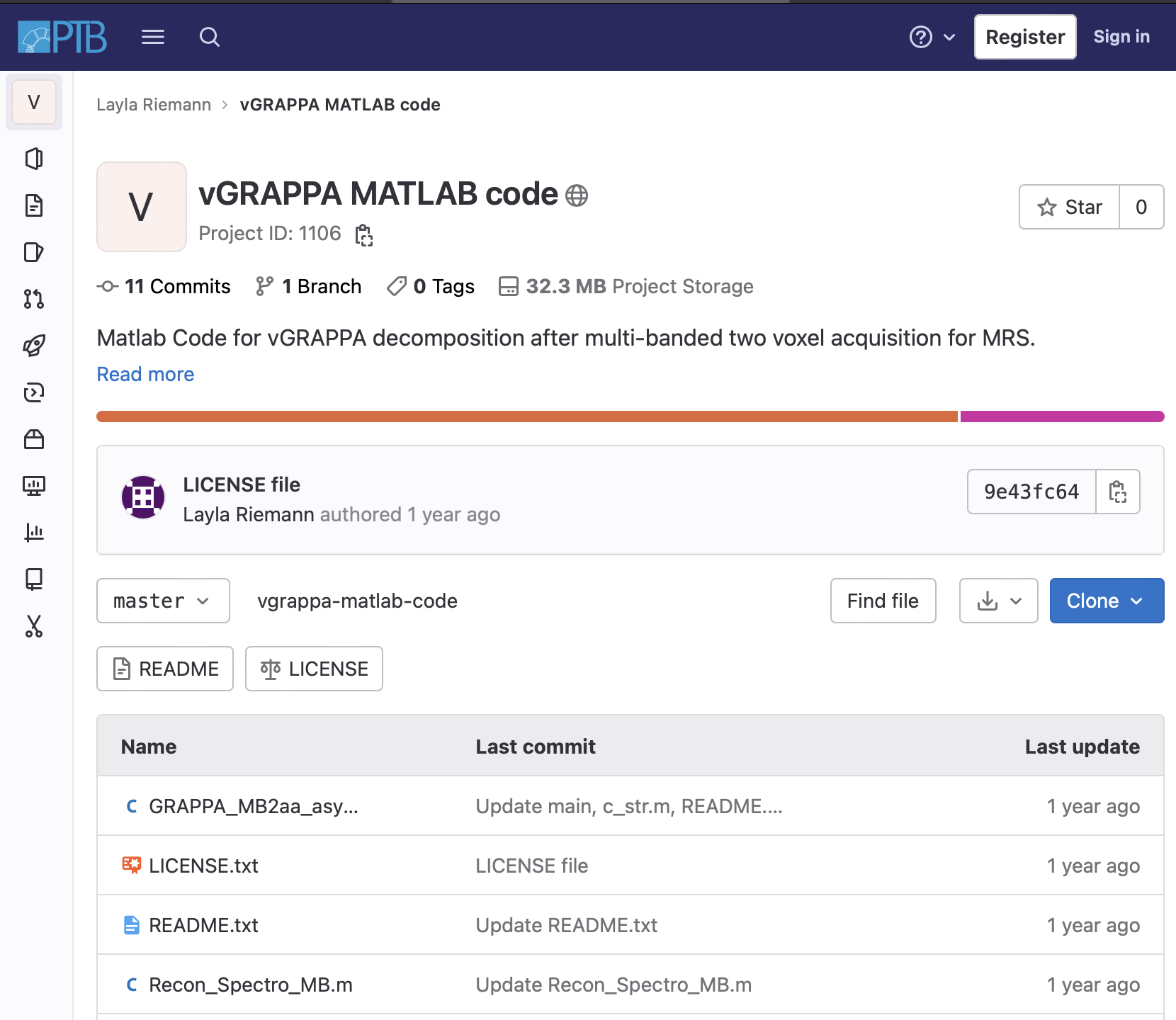

This MRM Reproducible Research Insights interview is with Layla Tabea Riemann and Ariane Fillmer from Physikalisch-Technische Bundesanstalt (PTB) in Berlin, first and last authors, respectively, of a paper entitled “Fourier-based decomposition for simultaneous 2-voxel MRS acquisition with 2SPECIAL”. This work was chosen as this month’s Highlights pick because it demonstrated exemplary reproducible research practices; specifically, the authors shared example data, their vGRAPPA algorithm code, and a demo script, all packaged in a nice GitLab code repository. To learn more, check out our recent MRM Highlights Q&A interview with Layla and Ariane.

General questions

1. Why did you choose to share your code/data?

I think that publicly funded research belongs to the public, and should be accessible without a paywall or anything like that. Along the same lines, we have an obligation to make the most of data or code that was acquired or developed, respectively, using public funds. Moreover, I don’t see any point in developing stuff and using all that time and energy, only to have it vanish into a drawer because it is too hard to access and too difficult to reverse engineer. Also, reinventing something that other people have already done is a waste of time and money. We wouldn’t make any advances at all if everybody kept their developments under wraps.

2. What is your lab or institutional policy on sharing research code and data?

In the case of in vivo data, it is obviously a bit trickier for ethical and data protection reasons, but in general at PTB it is encouraged to share data and code. That said, there has been a shift with acquired in vivo data, and so we were able to share in vivo data from the repeatability measurements that were acquired for Layla’s first paper. I think it is really valuable to make these kinds of data available publicly, because in this case in particular the acquisition was very time consuming and expensive; but also, because these data, together with the statistical framework, allow you to validate different postprocessing methods without having to first invest in the acquisition of such a data set.

3. At what stage did you decide to share your code/data? Is there anything you wish you had known or done sooner?

To me it was always clear that it would be desirable to share the code and data if possible. I really like the initiatives of MRM and the ISMRM that strongly encourage data sharing; for example, the spectroscopy study group held a poster competition open only to contributions that demonstrated good reproducible research practices, such as code sharing. Because of this poster prize competition, the code for vGRAPPA ended up being published much sooner than it would otherwise have been, since normally I would have waited to share it until the paper was published.

4. How do you think we might encourage researchers in the MRI community to contribute more open-source content along with their research papers?

I do think that some great steps in the right direction have already been made, as I said before. There are the study group prizes, for example, and the fact that manuscript reviewers are asked to say whether a study demonstrates good reproducible research practices, and so on. However, since publications are the scientific currency, I think it is important for the society to treat published data sets and published code on a par with the publications themselves, and not just as “attachments”. This would show that there is proper appreciation of the considerable work, time, and effort that went into producing them. Especially with artificial intelligence and machine learning now picking up pace, I do see a gross imbalance in paper output emerging between people who develop algorithms to analyze data, and people who actually spend months or years in acquiring data and making sure these data adhere to a certain minimal quality standard (usually quite high). If a published high-quality data set is treated as a high-quality publication, junior researchers spending time acquiring data may have a greater incentive to publish them.

Questions about the specific reproducible research habit

1. What advice do you have for people who would like to use tools like GitLab for version control and sharing code?

People who use these tools to collaborate should make sure that not everybody gets to pull, push, and merge things, or at least make sure that one person reviews changes before they are merged. Otherwise, things will get messy very quickly. It’s also necessary to make sure that collaborators have a somewhat similar coding style. Moreover, code needs to be properly commented, so it is easier to understand the thought process, and hence, how to use it.

2. What questions did you ask yourselves while you were developing the code that would eventually be shared?

We asked ourselves how we should comment the code and what variable names we could use to make it easy for others to understand.

3. How do you recommend that people use the project repository you shared?

I would recommend they look at the comments to figure out the specific order in which the data need to be put into the algorithm. This will likely differ a bit for different scanners and sequences.

4. Are there any other reproducible research habits that you didn’t use for this paper but might be interested in trying in the future?

The following does not really concern future projects, but another project done by our group. We wrote a paper (MRM 11/2021 https://onlinelibrary.wiley.com/doi/10.1002/mrm.29034) in which we investigated in great depth the reproducibility and repeatability of spectroscopic acquisitions, also shining a light on the reproducibility habits of MR spectroscopy research. In that paper, we published not only the code used, but also the anonymized acquired data, to allow its broader use within the MRS community.

As our group is quite small, so far we have never worked with big collaborative research tools, such as Jira boards and things like that, where everyone has defined tasks, sub-tasks, and time frames (and also sees those of the other members of the group). For big projects, this enables you to keep track of everything and enhances combined productivity, as well as reproducibility, and it is something to think about with regard to future projects.